If you haven’t read the first blog post yet, I highly recommend starting there — it lays the foundation for what partitioning is and why it matters.

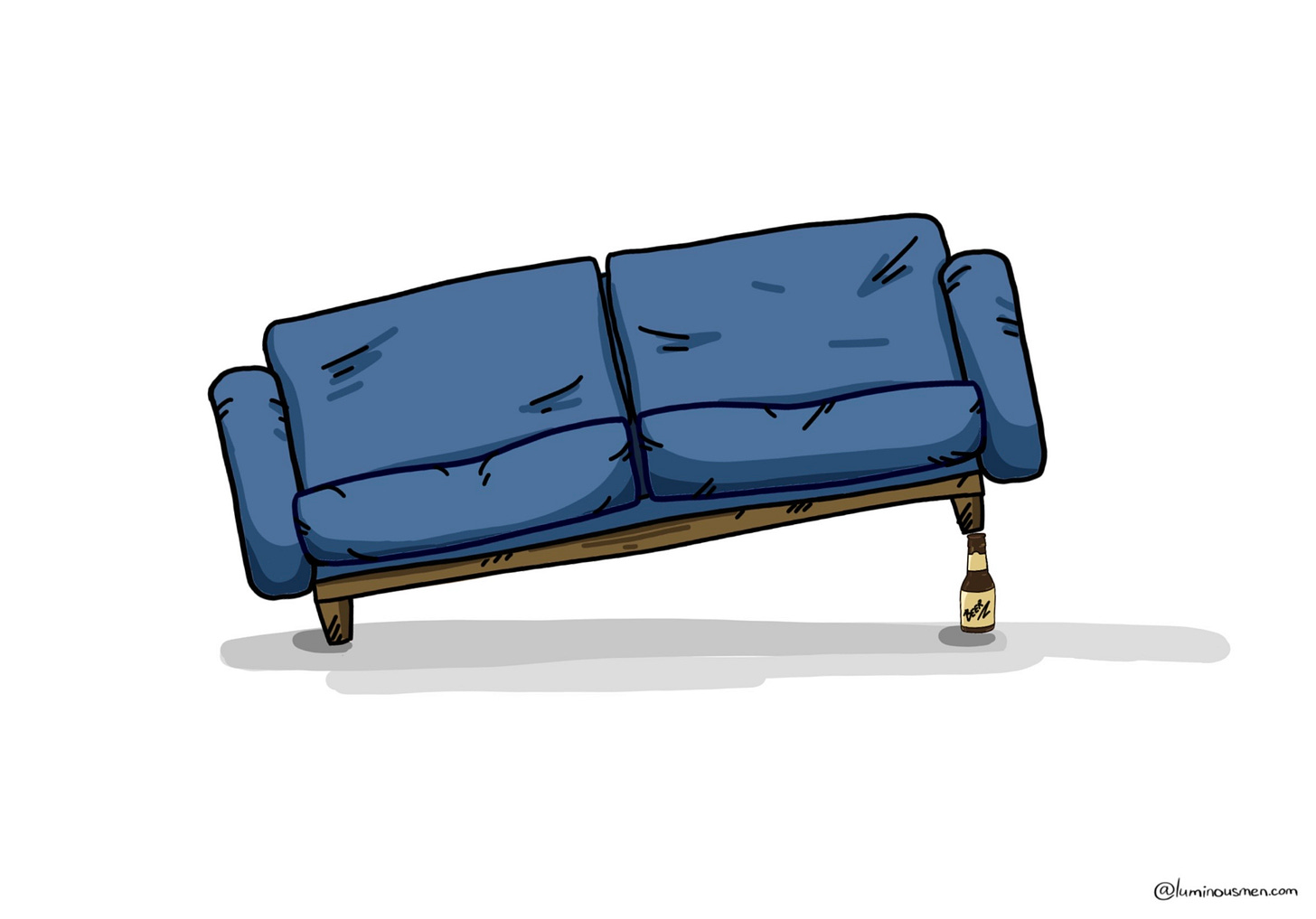

Ever tried moving a giant couch into a tiny apartment?

You eyeball the hallway. "Yeah, it'll fit."

You try turning it upright. "Nope."

You remove the door. "Still no."

Two hours later, you're still trying to wedge it in, sweating and cursing.

That's what bad partitioning feels like.

Partitioning is half science, half black magic, but like everything in engineering, a few good practices can keep you from shooting yourself in the foot. So let’s dig in.

1. Understand Your Workload

First rule of partitioning: design for your actual workload, not the one you wish you had. Blindly partitioning without knowing your data access patterns is how you end up solving the wrong problem — and adding new ones for free.

Before you touch a schema, sit down and ask yourself the following questions:

Read vs Write Patterns

Do writes need strict ordering (like event logs or telemetry), or can they be spread across shards? Strict global ordering can force all writes through a single partition, which then becomes a bottleneck. If ordering only matters per key (per device or per user, say), or your workload tolerates looser ordering altogether, you have more flexibility in distributing the load.

Are you mostly reading, mostly writing, or doing both all the time? Write-heavy workloads may require different strategies than read-optimized ones. For instance, systems that ingest large volumes of telemetry data benefit from append-optimized, sequential writes. In contrast, read-heavy applications like recommendation engines might favor partitions that support fast lookups.

💡 A recommendation engine might need to frequently retrieve a user’s recent activity, preferences, or interactions. If that data is partitioned by user ID, the system can jump straight to the right partition instead of scanning irrelevant data.
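To make that concrete, here is a minimal sketch of user-ID partitioning in Python. Everything in it is an assumption for illustration: the `ActivityStore` class, the `partition_for_user` helper, and the partition count of 8 are hypothetical, and a real system would keep partitions on separate nodes rather than in one process.

```python
import hashlib

NUM_PARTITIONS = 8  # hypothetical; real systems size this from data volume


def partition_for_user(user_id: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Map a user ID to a partition index with a stable hash.

    hashlib is used instead of the built-in hash() because string hashes
    are randomized per Python process, which would break routing.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


class ActivityStore:
    """Toy in-memory store: one dict per partition, keyed by user ID."""

    def __init__(self, num_partitions: int = NUM_PARTITIONS):
        self.partitions = [dict() for _ in range(num_partitions)]

    def _partition(self, user_id: str) -> dict:
        return self.partitions[partition_for_user(user_id, len(self.partitions))]

    def record(self, user_id: str, event: str) -> None:
        # All events for a user land in the same partition.
        self._partition(user_id).setdefault(user_id, []).append(event)

    def recent(self, user_id: str, limit: int = 10) -> list:
        # Only one partition is consulted; the others are never touched.
        return self._partition(user_id).get(user_id, [])[-limit:]
```

The point of the sketch is the `recent` method: the lookup cost depends on one partition, not on how many partitions exist.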

Access Patterns

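Do most queries target single records, small ranges, or large scans? Partitioning should reflect these patterns: point lookups tend to suit hash partitioning on the lookup key, range queries favor range partitioning on the ordered column, and large scans benefit when the partition key lets the engine skip partitions it doesn't need.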
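For range-style access, a rough sketch of range partitioning might look like the following. The boundary values and function names are made up for illustration; the idea is simply that a query over a small ID range only needs to visit the partitions whose ranges overlap it.

```python
from bisect import bisect_right
from typing import List

# Hypothetical split points. They define four partitions:
#   0: ids < 1000, 1: 1000..1999, 2: 2000..2999, 3: ids >= 3000
BOUNDARIES = [1000, 2000, 3000]


def partition_for_id(order_id: int) -> int:
    """Find the partition whose range contains order_id (binary search)."""
    return bisect_right(BOUNDARIES, order_id)


def partitions_for_range(low: int, high: int) -> List[int]:
    """Partitions that can hold ids in the inclusive range [low, high].

    Every partition outside this list can be skipped entirely.
    """
    if low > high:
        return []
    return list(range(partition_for_id(low), partition_for_id(high) + 1))
```

With these boundaries, a scan over ids 1500 to 2100 touches partitions 1 and 2 and skips the other two. A hash-partitioned layout would have to visit every partition for the same query.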

Latency Expectations

Real-time? Near real-time? "We don't care, it runs overnight"? Smaller, fine-grained partitions are better for low-latency interactive queries. Larger partitions are more efficient for batch processing and help reduce metadata overhead during scans.
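One way to get a feel for the granularity trade-off is to count how many partitions a query has to open. The sketch below assumes time-based partitions at either daily or hourly granularity; the key formats and helper names are hypothetical.

```python
from datetime import datetime, timedelta


def partition_key(ts: datetime, granularity: str) -> str:
    """Derive a time-based partition key at the chosen granularity."""
    if granularity == "day":
        return ts.strftime("%Y-%m-%d")
    if granularity == "hour":
        return ts.strftime("%Y-%m-%dT%H")
    raise ValueError(f"unsupported granularity: {granularity!r}")


def partitions_touched(start: datetime, end: datetime, granularity: str) -> set:
    """Distinct partition keys covering the half-open window [start, end)."""
    keys = set()
    current = start.replace(minute=0, second=0, microsecond=0)
    while current < end:
        keys.add(partition_key(current, granularity))
        current += timedelta(hours=1)
    return keys
```

A 30-day batch scan opens 30 daily partitions but 720 hourly ones, which is where the metadata overhead comes from. A one-hour interactive query opens a single partition either way, but the hourly partition holds only that hour of data while the daily one holds the whole day.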

Geography & Compliance Needs

Does your data need to stay in a particular region for legal or performance reasons (think GDPR, CCPA, multi-region replication)? If compliance requires data locality, your partitioning strategy must enforce those boundaries explicitly, possibly through region-based partitioning keys.
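As a sketch of what "enforce those boundaries explicitly" could mean, the snippet below puts the region at the front of the partition key and refuses to place a partition anywhere else. The residency-to-region mapping, the region names, and both helpers are assumptions for illustration, not a compliance recipe.

```python
# Hypothetical mapping from a customer's legal residency to a storage region.
RESIDENCY_TO_REGION = {"EU": "eu-west", "US": "us-east"}


def partition_key(residency: str, customer_id: str) -> str:
    """Build a region-prefixed partition key.

    Unknown residencies are rejected rather than silently defaulted,
    so data never lands in a region nobody chose for it.
    """
    region = RESIDENCY_TO_REGION.get(residency.upper())
    if region is None:
        raise ValueError(f"no storage region configured for residency {residency!r}")
    return f"{region}/{customer_id}"


def check_placement(key: str, node_region: str) -> None:
    """Refuse to place a partition on a node outside its required region."""
    required_region = key.split("/", 1)[0]
    if required_region != node_region:
        raise PermissionError(
            f"partition {key!r} must stay in {required_region}, not {node_region}"
        )
```

Because the region is part of the key itself, any component that routes or replicates a partition can check the boundary without looking anything else up.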

Think 2+ years ahead. Changing a partitioning strategy on a live system is painful — it's expensive, slow, and often involves downtime. It's like getting a bad tattoo: seems like a good idea at first, but later on you're filled with regret.